Kernel function to add the elements of two arrays; wait for the GPU to finish before accessing the results on the host; check for errors (all values should be 3.0).
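The three steps named above (add kernel, synchronize, check the result) can be sketched as a small unified-memory program. This is a minimal sketch, not the tutorial's exact code: the array length of `1 << 20`, the initial values 1.0 and 2.0 (so every sum is 3.0), the 256-thread block size and the helper `maxError` are illustrative assumptions.

```cuda
#include <cmath>
#include <cstdio>
#include <cuda_runtime.h>

// Kernel function to add the elements of two arrays (grid-stride loop,
// so any grid size covers all n elements).
__global__ void add(int n, const float* x, float* y) {
    int index = blockIdx.x * blockDim.x + threadIdx.x;
    int stride = blockDim.x * gridDim.x;
    for (int i = index; i < n; i += stride)
        y[i] = x[i] + y[i];
}

// Hypothetical host helper: largest absolute deviation of y[0..n) from `expected`.
float maxError(const float* y, int n, float expected) {
    float err = 0.0f;
    for (int i = 0; i < n; ++i)
        err = std::fmax(err, std::fabs(y[i] - expected));
    return err;
}

int main() {
    const int N = 1 << 20;  // assumed array length
    float *x = nullptr, *y = nullptr;

    // Unified memory: the same pointers are valid on host and device.
    if (cudaMallocManaged(&x, N * sizeof(float)) != cudaSuccess ||
        cudaMallocManaged(&y, N * sizeof(float)) != cudaSuccess) {
        std::fprintf(stderr, "cudaMallocManaged failed (is a GPU visible?)\n");
        return 1;
    }
    for (int i = 0; i < N; ++i) { x[i] = 1.0f; y[i] = 2.0f; }

    const int blockSize = 256;
    const int numBlocks = (N + blockSize - 1) / blockSize;
    add<<<numBlocks, blockSize>>>(N, x, y);

    // The launch returns immediately: report launch errors, then wait for
    // the GPU to finish before accessing y on the host.
    cudaError_t err = cudaGetLastError();
    if (err == cudaSuccess) err = cudaDeviceSynchronize();
    if (err != cudaSuccess) {
        std::fprintf(stderr, "CUDA error: %s\n", cudaGetErrorString(err));
        return 1;
    }

    // Check for errors (all values should be 3.0f).
    std::printf("Max error: %f\n", maxError(y, N, 3.0f));

    cudaFree(x);
    cudaFree(y);
    return 0;
}
```

On a working installation the reported max error should be 0, since 1.0f + 2.0f is exactly 3.0f.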

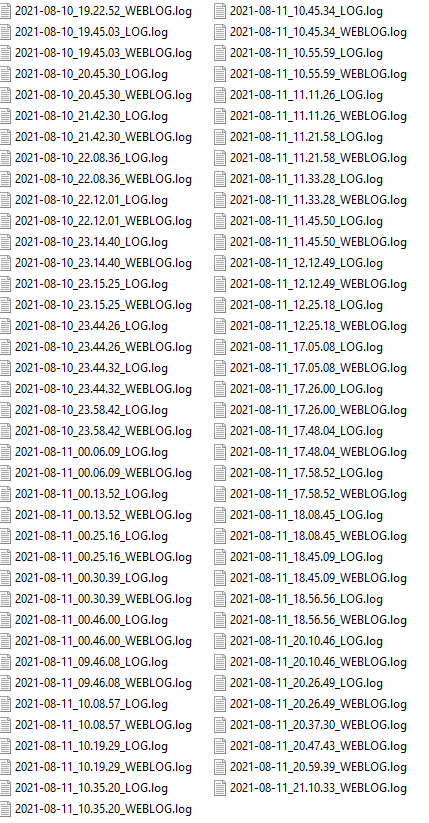

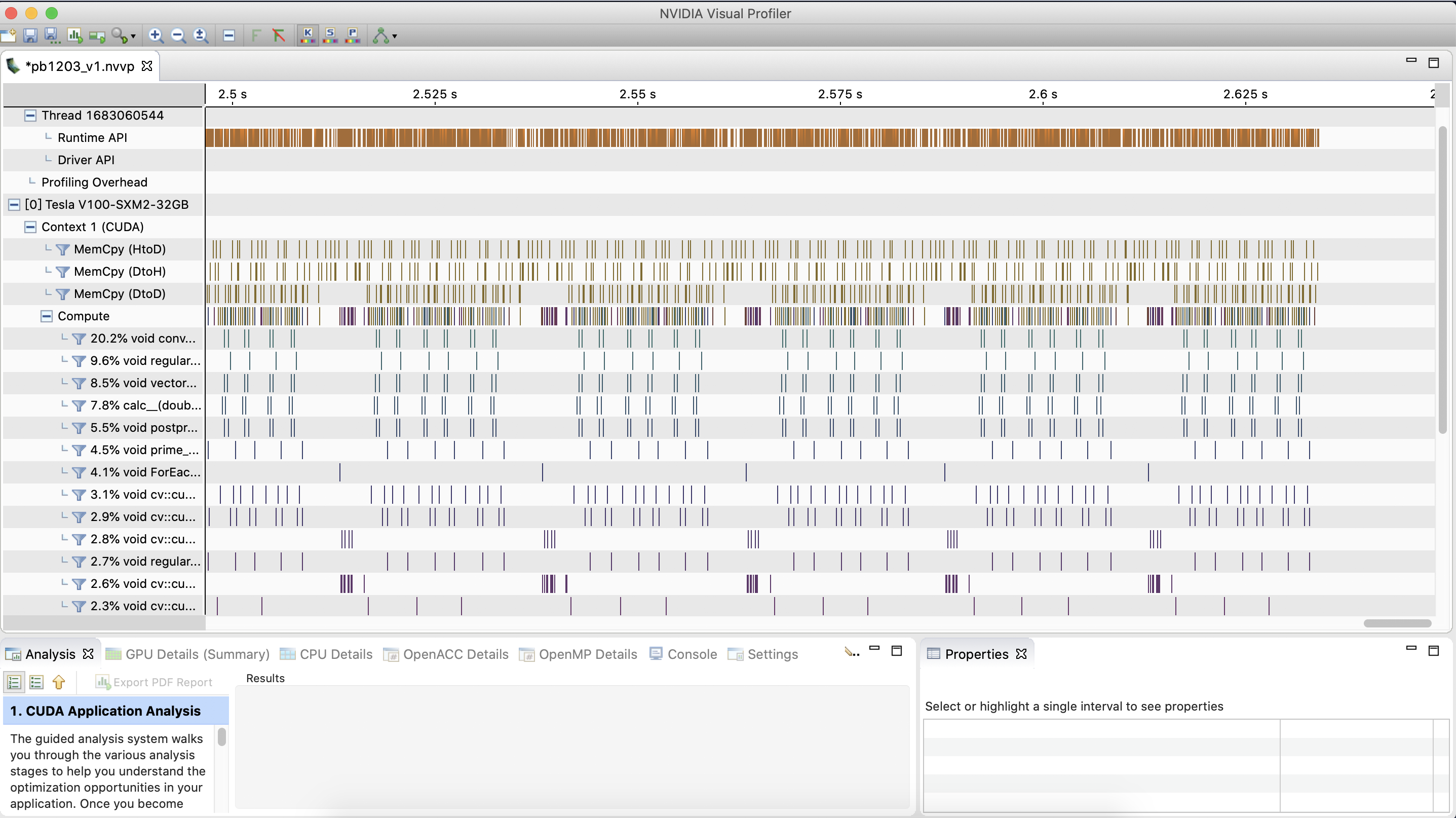

I have recently installed CUDA on my Arch Linux machine through the system's package manager, and I have been trying to test whether or not it is working by running a simple vector addition program. I simply copy-paste the code from this tutorial (both the version using one kernel and the version using more) into a file titled cuda_test.cu and run `nvcc cuda_test.cu -o cuda_test`. In either case, the program runs and I get no errors (both in the sense that the program doesn't crash and that its output reports no errors).

But when I run the CUDA profiler on the program with `sudo nvprof`, it reports that it is profiling the process, warns "Some profiling data are not recorded. Make sure cudaProfilerStop() or cuProfilerStop() is called before application exit to flush profile data.", and says that no kernels and no API activities were profiled.

The latter warning is not my main problem or the topic of my question; my problem is the message saying that no kernels and no API activities were profiled. Does this mean that the program was run entirely on my CPU, or is it an error in nvprof? This seems wrong; am I misusing the API, or is there some other problem?

I have found a discussion about the same error, but there the answer was that the wrong version of CUDA was installed. In my case, the version installed is the latest one available through the system's package manager (version 10.1.243-1).

Edit: Trying to adhere to the warning does not solve the problem. Adding a call to cudaProfilerStop() (or cuProfilerStop()), adding cudaDeviceReset() at the end as suggested, including the appropriate header (cuda_profiler_api.h or cudaProfiler.h), linking the appropriate library and compiling with `nvcc cuda_test.cu -o cuda_test -lcuda` yields a program which can still run, but which, when nvprof is run on it, returns `==12558== NVPROF is profiling process 12558, command: …` followed by `==12558== Warning: Some profiling data are not recorded.` A run on a.out shows the same `NVPROF is profiling process 12260` header.

This has not solved the original problem, and has in fact created a new error; the same happens when cudaProfilerStop() is used on its own or alongside cuProfilerStop() and cudaDeviceReset(). Is there any way I can get nvprof to display the expected output?

The code is, as mentioned, copied from a tutorial to test whether CUDA is working, though I have also included the calls to cudaProfilerStop() and cudaDeviceReset() for clarity.

Hi, I am having problems with profiling any application on Ubuntu 20.04 with a WSL2 host. I get this result: `==3201== NVPROF is profiling process 3201, command: …` followed by `==3201== Warning: Some profiling data are not recorded.` I see the same header when profiling the vectorAdd sample ("Vector addition of 50000 elements", process 22918).

nvprof is a command-line tool bundled with the CUDA Toolkit that enables you to collect and view profiling data, i.e., a timeline of CUDA-related activities on both CPU and GPU; it profiles any executable, including CUDA programs. NVIDIA provides the Visual Profiler (nvvp) and nvprof for application profiling across GPU and CPU. When profiling for GPUs, AMReX recommends nvprof, NVIDIA's command-line profiler, whose results can be opened in the Visual Profiler.

Kernel launches are asynchronous: the CPU thread launches the kernel but does not wait for the kernel to complete. When tracking down a CUDA launch error, AMReX therefore suggests Gpu::synchronize(), which holds the CPU thread until the GPU has finished, so the error surfaces close to the launch that caused it.

I am using this command to generate the metrics for one kernel: `nvprof --metrics all --log-file log.txt --csv --profile-api-trace none myapp.exe`. I get about 120 lines of output for the performance counters. The Achieved Occupancy experiment in Profile mode measures occupancy during execution of the kernel and adds the achieved values to the Occupancy experiment detail pane alongside the theoretical values.

Note that you mention nvprof, but the pictures you are showing are from nvvp, the Visual Profiler.

In a separate test with PyTorch 1.0.1 (build py3.6_cuda10.0.130_cudnn7.4), I launch kernels on five streams from a Python script, stream.py.
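Whether a kernel actually ran on the GPU can be checked without the profiler by inspecting the CUDA runtime's return codes; CUDA does not silently fall back to running kernels on the CPU. The following is a minimal sketch under stated assumptions: the kernel `fill`, the `CHECK` macro and `run()` are hypothetical names for illustration. It checks for a visible device, checks the launch with cudaGetLastError(), waits with cudaDeviceSynchronize() (because launches are asynchronous), and calls cudaProfilerStop() and cudaDeviceReset() before exit so that a running profiler can flush its data.

```cuda
#include <cstdio>
#include <cuda_runtime.h>
#include <cuda_profiler_api.h>  // declares cudaProfilerStart() / cudaProfilerStop()

// Hypothetical helper: print the failing call and bail out of run().
#define CHECK(call)                                                    \
    do {                                                               \
        cudaError_t e_ = (call);                                       \
        if (e_ != cudaSuccess) {                                       \
            std::fprintf(stderr, "%s failed: %s\n", #call,             \
                         cudaGetErrorString(e_));                      \
            return 1;                                                  \
        }                                                              \
    } while (0)

// Trivial kernel: element i receives the value i.
__global__ void fill(int* out, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) out[i] = i;
}

int run() {
    int devices = 0;
    CHECK(cudaGetDeviceCount(&devices));  // fails if no usable driver/GPU
    std::printf("CUDA devices visible: %d\n", devices);

    const int n = 1024;
    int* d_out = nullptr;
    CHECK(cudaMalloc(&d_out, n * sizeof(int)));

    fill<<<(n + 255) / 256, 256>>>(d_out, n);
    CHECK(cudaGetLastError());       // launch-time errors
    CHECK(cudaDeviceSynchronize());  // errors raised while the kernel ran

    int last = -1;
    CHECK(cudaMemcpy(&last, d_out + n - 1, sizeof(int), cudaMemcpyDeviceToHost));
    std::printf("last element: %d (expected %d)\n", last, n - 1);
    CHECK(cudaFree(d_out));

    CHECK(cudaProfilerStop());  // lets a running profiler flush its buffers
    CHECK(cudaDeviceReset());   // destroys the context before the process exits
    return last == n - 1 ? 0 : 1;
}

int main() { return run(); }
```

If one of the checks fails (for example at cudaGetDeviceCount), the kernel never reached the GPU; if the expected last element is printed, the kernel did run on the device, which points at the profiler setup rather than the program. cudaProfilerStop() is part of the runtime API, so a plain nvcc build should be enough for it; -lcuda is only needed for the driver-API cuProfilerStop() declared in cudaProfiler.h.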
Running nvprof with the following command:

`/usr/local/cuda/bin/nvprof --concurrent-kernels on --print-api-summary --print-gpu-summary --output-profile profile.nvvp -f --profile-from-start off --track-memory-allocations on --demangling on --trace gpu,api python stream.py`

When I then open the resulting profile.nvvp in nvvp, I see the kernels on the five streams running one after the other, instead of all at the same time.
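To check whether the device overlaps kernels from different streams independently of PyTorch, a standalone test can help. This is a minimal CUDA sketch under assumptions: the `spin` kernel, the cycle count and `run()` are hypothetical. Each launch uses a single thread so that it leaves most of the GPU free, since kernels can only overlap when resources remain and nothing between the launches implicitly synchronizes the device. The program also prints the device's `concurrentKernels` property.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Busy-wait for roughly `cycles` GPU clock ticks. Launched with one thread,
// it occupies almost none of the device, leaving room for other streams.
__global__ void spin(long long cycles) {
    long long start = clock64();
    while (clock64() - start < cycles) { }
}

int run() {
    cudaDeviceProp prop;
    if (cudaGetDeviceProperties(&prop, 0) != cudaSuccess) return 1;
    std::printf("%s, concurrentKernels = %d\n", prop.name, prop.concurrentKernels);

    const int kStreams = 5;               // five streams, as in the question
    const long long kCycles = 1LL << 26;  // assumed spin length per kernel
    cudaStream_t streams[kStreams];
    for (int s = 0; s < kStreams; ++s)
        if (cudaStreamCreate(&streams[s]) != cudaSuccess) return 1;

    // One launch per non-default stream, with no synchronizing call
    // (cudaMalloc, cudaMemcpy, default-stream work) in between.
    for (int s = 0; s < kStreams; ++s)
        spin<<<1, 1, 0, streams[s]>>>(kCycles);
    if (cudaGetLastError() != cudaSuccess) return 1;

    // Wait for all streams before tearing them down.
    if (cudaDeviceSynchronize() != cudaSuccess) return 1;
    for (int s = 0; s < kStreams; ++s)
        cudaStreamDestroy(streams[s]);
    return 0;
}

int main() { return run(); }
```

Running this under nvprof with `--output-profile` and opening the result in nvvp should, on a device that reports concurrentKernels = 1, show the five spin kernels overlapping on the timeline. If they overlap here but not in the PyTorch script, the serialization more likely comes from how the script issues its work than from the profiler.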
